It’s May 14, 2027. Your company experienced a data breach three weeks ago that exposed customer names, phone numbers, and payment details. Your team patched the vulnerability, changed a few passwords, and sent an internal email saying “handled.”

Nobody told the Data Protection Board. Nobody notified the affected customers. There was no formal incident response. Just an internal email, and a hope that no one would notice.

The Data Protection Board noticed. And now you’re looking at a penalty notice for ₹250 crore, for failing to implement reasonable security safeguards. Another ₹200 crore for not reporting the breach. All because a law that’s been on the horizon for years finally has teeth, and your organization wasn’t ready when it bit. This isn’t a hypothetical designed to scare you. It’s the exact scenario the DPDP Act was designed to address. And May 13, 2027, is the date those consequences switch on.

The Clock Is Already Running

India’s Digital Personal Data Protection Act was passed in August 2023. The operational rules were notified in November 2025. That notification started an 18-month countdown to full enforcement.

We are inside that window right now. May 13, 2027, is the date on which every substantive obligation under the Act becomes enforceable simultaneously: consent mechanisms, privacy notices, breach reporting systems, data retention policies, user rights, and children’s data protection. All of it, at once, with no grace period after the deadline.

Here’s the part most businesses haven’t properly absorbed: the 18-month window wasn’t meant for waiting; it was meant for building. The period from November 2025 to May 2027 is intended to be spent creating the systems, controls, contracts, and governance structures that compliance actually requires.

Most enterprises we speak with are treating May 2027 as a start date. That’s exactly backward.

Who Does This Apply To? Almost Everyone

Before some readers convince themselves this doesn’t apply to them, let’s be clear about scope. The DPDP Act applies to any organization, regardless of size or sector, that processes the digital personal data of individuals in India. Personal data is broadly defined and includes names, phone numbers, email addresses, IP addresses, device identifiers, financial data, health information, behavioral data, and cookies.

If you run an e-commerce platform, a fintech app, a healthcare service, an HR system, a SaaS product, a logistics operation, or an edtech platform, you’re in scope. If you’re a B2B company that stores client contacts in a CRM, you’re in scope. If you’re a startup with a few thousand users, you’re in scope. And here’s what catches international businesses off guard: the Act has extraterritorial reach. If you’re headquartered outside India but offer goods or services to Indian users, the law applies to you too, much like the EU’s GDPR. The DPDP Act follows the data, not the geography of whoever holds it. The honest question isn’t “does this apply to us?” For most businesses, it does. The question is what you’re required to do about it, and whether you’ve started.

What the Law Actually Requires

A lot of DPDP “compliance” being done right now is surface-level: a revised privacy policy, a new cookie banner, a legal team sign-off. That’s not compliance, that’s theatre. It will fail the first real audit. Here’s what the Act actually requires, in plain terms.

1) Consent that means something. The DPDP Act doesn’t accept the kind of consent most Indian businesses currently collect. Bundled consent (a single checkbox covering multiple purposes) is invalid. Each processing purpose needs its own specific, informed, unambiguous consent. If you collect data for personalization, analytics, and marketing, that’s three separate consents. If you haven’t redesigned your consent flows yet, this alone is a significant piece of work.

2) Privacy notices that are actually readable. Notices must be standalone documents, separate from your terms and conditions, written in plain language, available in English and regional languages, clearly explaining what data you collect, why you collect it, and what rights the user has. Most current privacy policies don’t meet this bar.

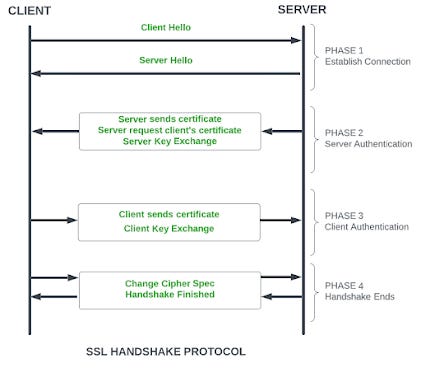

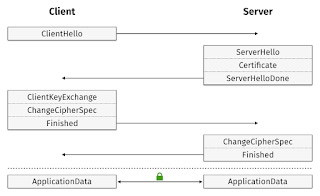

3) A 72-hour breach clock. When a data breach occurs, you have 72 hours to notify the Data Protection Board. Not 72 hours to investigate, and not 72 hours to decide whether it’s serious enough to report: 72 hours to notify. That requires an incident response process capable of identifying, assessing, and escalating a breach within hours, not days, and certainly not on “Indian Stretchable Time.” Most Indian organizations lack a tested incident response plan.

4) Real infrastructure for user rights. Individuals can request to see their data, correct it, or have it deleted. They can nominate someone else to exercise those rights on their behalf. You need a process to receive these requests, verify the person, fulfill the request across every internal system that holds their data, and complete the whole thing within 90 days. Building that end-to-end capability is harder than it sounds, especially when data lives across a product database, a CRM, a support system, and three SaaS tools.

5) Vendor contracts that reflect the new reality. Every third party that processes personal data on your behalf, such as cloud providers, analytics tools, payment processors, and support platforms, must now carry compliance obligations. Most contracts signed before 2025 don’t include the security clauses required by the DPDP Act. That means reviewing and renegotiating contracts. Not for a handful of vendors, but for every processor in your chain.

6) A named grievance officer. A contact point, whether a person or team, must be publicly listed on your website or app before May 2027. Complaints from users must be addressed. This sounds simple. It requires a working complaints process behind it to mean anything.
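To make items 1, 3, and 4 more concrete, here is a minimal Python sketch of what purpose-level consent and deadline tracking might look like. Everything in it is illustrative: `ConsentLedger`, the purpose names, and the helper functions are hypothetical, and it assumes the 72-hour clock starts at the moment a breach is detected and the 90-day clock at the moment a request is received. Check the Act and the Rules for the actual triggers before building on it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Windows taken from items 3 and 4 above; when each clock starts is an assumption.
BREACH_NOTIFY_WINDOW = timedelta(hours=72)
RIGHTS_REQUEST_WINDOW = timedelta(days=90)


@dataclass
class ConsentLedger:
    """One user's consent, recorded purpose by purpose (never bundled)."""
    granted: dict[str, datetime] = field(default_factory=dict)

    def grant(self, purpose: str, when: datetime) -> None:
        # Each purpose gets its own entry and its own timestamp.
        self.granted[purpose] = when

    def withdraw(self, purpose: str) -> None:
        # Withdrawing one purpose leaves the others untouched.
        self.granted.pop(purpose, None)

    def allows(self, purpose: str) -> bool:
        return purpose in self.granted


def breach_notification_deadline(detected_at: datetime) -> datetime:
    """Latest time to notify the Board, assuming the clock starts at detection."""
    return detected_at + BREACH_NOTIFY_WINDOW


def rights_request_deadline(received_at: datetime) -> datetime:
    """Latest time to complete a user rights request."""
    return received_at + RIGHTS_REQUEST_WINDOW


def hours_remaining(deadline: datetime, now: datetime) -> float:
    """Hours left before a deadline (negative once it has passed)."""
    return (deadline - now).total_seconds() / 3600


# Usage: consent for analytics does not imply consent for marketing.
ledger = ConsentLedger()
ledger.grant("analytics", datetime(2027, 5, 14, 9, 0, tzinfo=timezone.utc))
print(ledger.allows("analytics"))  # True
print(ledger.allows("marketing"))  # False

detected = datetime(2027, 5, 14, 9, 0, tzinfo=timezone.utc)
print(breach_notification_deadline(detected))  # 2027-05-17 09:00:00+00:00
```

The point of storing consent per purpose is that a withdrawal for marketing never has to touch analytics, and any processing job can check `allows()` for its own purpose before it runs.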

The Penalties, and Why the Fine Might Not Be the Worst Part

Let’s look at the numbers, because they matter to the business.

- Up to ₹250 crore, for failing to implement reasonable security safeguards

- Up to ₹200 crore, for failing to notify the Board or affected individuals of a breach

- Up to ₹200 crore, for violations involving children’s data

- Up to ₹50 crore, for other breaches of Data Fiduciary obligations

These are per-violation figures. A single data breach incident can trigger multiple violations simultaneously: inadequate security, failure to notify individuals, and failure to notify the Board. Cumulative exposure from one incident can exceed ₹650 crore.
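As a rough check on that arithmetic, here is a small Python sketch. It assumes, as the paragraph above does, that each of the three named failures is penalized separately at its maximum. The dictionary keys and the function name are hypothetical labels, not terms from the Act.

```python
# Maximum penalties in ₹ crore, per the figures listed above.
# Treating the two notification failures as separate violations is an
# assumption taken from the paragraph above, not a legal conclusion.
PENALTY_CAPS_CRORE = {
    "inadequate_security": 250,
    "failure_to_notify_individuals": 200,
    "failure_to_notify_board": 200,
}


def cumulative_exposure(violations: list[str]) -> int:
    """Sum the maximum penalty for each violation triggered by one incident."""
    return sum(PENALTY_CAPS_CRORE[v] for v in violations)


one_breach = [
    "inadequate_security",
    "failure_to_notify_individuals",
    "failure_to_notify_board",
]
print(cumulative_exposure(one_breach))  # 650
```

The three violations named above already add up to ₹650 crore at their maximums. Any further violation found in the same incident stacks on top of that.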

But here’s the thing most people miss when they focus on the fines: the Data Protection Board has the authority to order a halt on data processing while an investigation is underway (whether that much power in a government body’s hands reassures or worries you is a separate debate). For a bank, for a payments platform, for any business where data processing isn’t a supporting function but the product itself, an operational suspension isn’t a fine you can absorb. It’s a threat to the business itself.

The fine you can budget for. The suspension you can’t always survive.

Why “We’ll Sort It Before the Deadline” Doesn’t Work

The organizations that implemented GDPR know exactly how this plays out. The timeline that looks comfortable eighteen months out compresses dramatically once the actual work begins. Data mapping alone, going system by system to understand what personal data you hold, where it lives, who touches it, and why, takes weeks, not days. And you can’t build compliant consent flows, privacy notices, or user rights infrastructure without it. Everything downstream depends on it. Then come the vendor reviews, then the technical implementation of consent management, security hardening, and breach notification protocols, then the user rights infrastructure, then testing, validation, staff training, and more: in effect, a complete PDCA (Plan-Do-Check-Act) cycle.

Each of these is a project. They can run in parallel — but they can’t all start in March 2027. Organizations that begin serious implementation work after mid-2026 will be operating under severe time compression. Some won’t make it.

The correct starting point is not a revised privacy policy. It is appointing a compliance owner with actual authority, mapping your data, assessing your gaps against the specific obligations that apply to your business, and beginning structured implementation, in that order.
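For the data mapping step, a minimal Python sketch of an inventory might look like the following. The `DataMapEntry` fields mirror the questions raised earlier (what you hold, where it lives, who touches it, and why). The system names, owners, and helper functions are hypothetical examples, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataMapEntry:
    system: str                 # where the data lives
    categories: frozenset[str]  # what personal data it holds
    purpose: str                # why you have it ("" if nobody could say)
    owner: str                  # who touches it and is accountable for it


def systems_holding(data_map: list[DataMapEntry], category: str) -> list[str]:
    """Systems that must be touched when a user asks to see or delete this data."""
    return [e.system for e in data_map if category in e.categories]


def entries_without_purpose(data_map: list[DataMapEntry]) -> list[str]:
    """Systems holding personal data with no documented purpose: an obvious gap."""
    return [e.system for e in data_map if not e.purpose.strip()]


# Hypothetical inventory for a small product company.
data_map = [
    DataMapEntry("product_db", frozenset({"name", "phone", "payment"}), "order fulfilment", "engineering"),
    DataMapEntry("crm", frozenset({"name", "phone", "email"}), "sales follow-up", "sales"),
    DataMapEntry("support_desk", frozenset({"email"}), "", "support"),
]

print(systems_holding(data_map, "phone"))  # ['product_db', 'crm']
print(entries_without_purpose(data_map))   # ['support_desk']
```

Even a flat list like this answers two questions that the later work depends on: which systems a deletion request has to reach, and which holdings have no stated reason to exist.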

The Opportunity Nobody Talks About

Here’s the thing about the DPDP Act that doesn’t get said enough. It’s not just a compliance burden, it’s an opportunity to do something your customers will actually notice and value: give them genuine control and transparency over their own data.

The businesses that approach this honestly, building systems that actually work rather than ones that look compliant enough to pass an audit, will emerge from this with something real: more trust. Better data hygiene. Stronger processes. A privacy posture that holds up as regulation tightens globally, because India won’t be the last place to legislate on this.

Those treating it as a box to tick will find themselves scrambling when enforcement begins. And scrambling is expensive, far more expensive than building it right the first time.

Where Does Your Organization Stand?

Some honest questions worth answering, not for anyone else, just for yourself:

- Do you know exactly what personal data your organization holds, where it lives, and why you have it?

- Have your consent flows been redesigned for DPDP, with separate, specific, purpose-by-purpose consent?

- Do you have an incident response process capable of moving at 72-hour speed?

- Have your vendor contracts been reviewed against DPDP requirements?

- Is there a named person in your organization with the authority and budget to get this done?

If the answers are unclear, that’s your signal. Not to panic, but to start. The deadline is fixed, the work is substantial, and the window is narrowing by the day.

May 13, 2027, is not a target date. It’s a cutoff. The businesses that will be fine on May 14, 2027, are those that started in 2026, not those that haven’t even begun scoping the work.